Rise by Six: Your Daily Dose of Inspiration

Explore insights and stories that elevate your day.

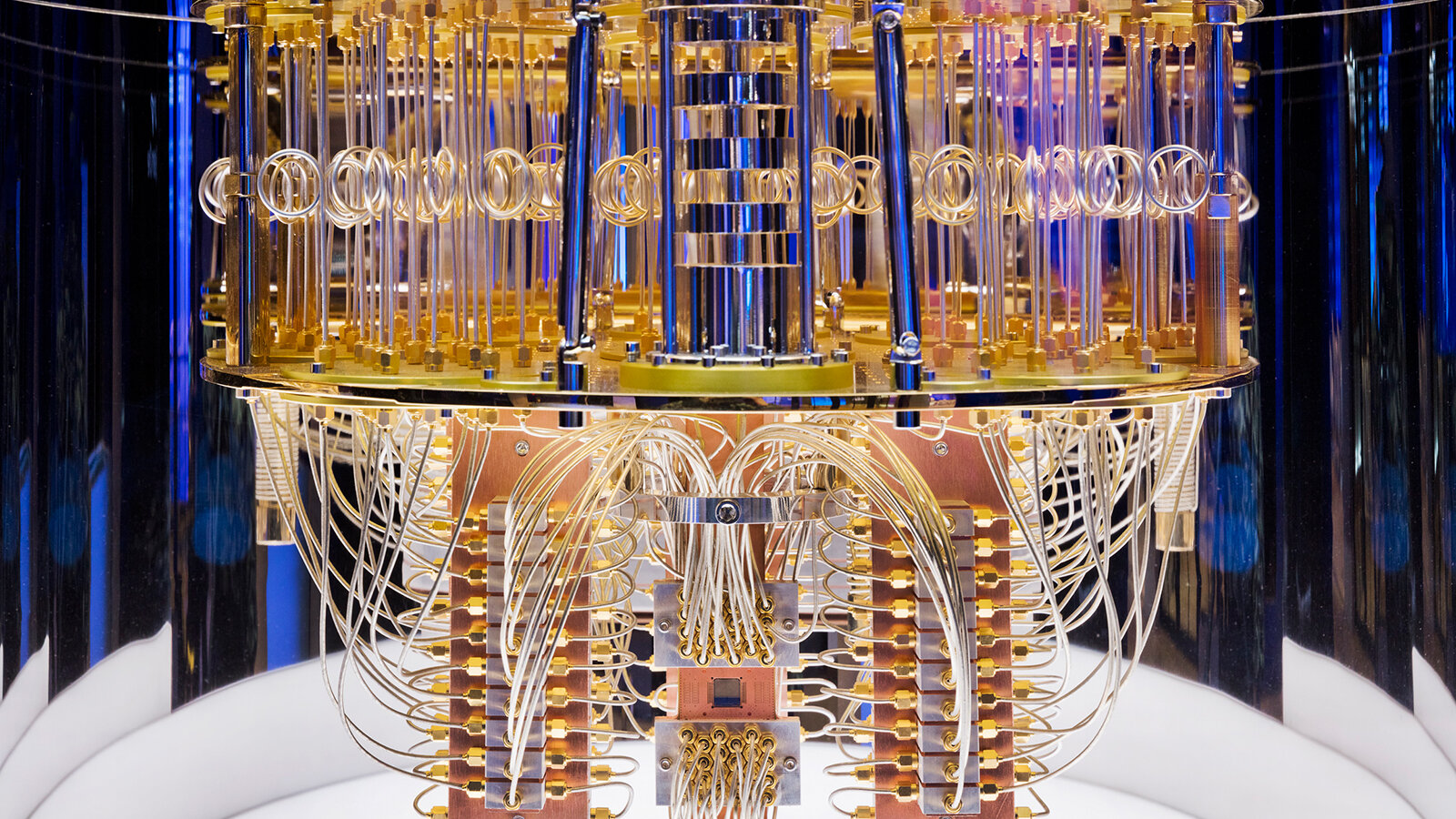

Quantum Computing: A Game Changer or Just a Fizzle?

Uncover the truth behind quantum computing: revolutionary breakthrough or overhyped fad? Dive in and decide for yourself!

Understanding Quantum Computing: The Basics Explained

Quantum computing is a technology that uses the principles of quantum mechanics to process information in ways that classical computers cannot. Unlike traditional bits, which are always either 0 or 1, quantum bits, or qubits, can exist in a weighted combination of 0 and 1 at the same time, a phenomenon known as superposition. For certain problems, this lets quantum algorithms reach an answer in far fewer steps than the best known classical methods, which is why the technology is being explored in fields such as cryptography, drug discovery, and artificial intelligence. As research in quantum computing advances, understanding its foundational concepts is essential for anyone looking to grasp its potential impact on our world.

To better understand quantum computing, consider these key concepts: superposition, entanglement, and quantum gates.

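- Superposition lets a qubit hold a combination of 0 and 1 at once, so a register of n qubits is described by 2^n numbers called amplitudes. Measuring the register returns only one outcome, so a quantum algorithm has to be designed so that the right answer is the most likely one.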

- Entanglement refers to a connection between qubits in which their measurement outcomes are correlated more strongly than classical physics allows, regardless of the distance separating them. These correlations cannot be used to send information faster than light.

- Quantum gates are the basic building blocks of quantum algorithms. They manipulate qubits through reversible operations and play a role similar to logic gates in classical computers.
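
To make these three ideas concrete, here is a minimal sketch in plain Python that simulates one and two qubits as lists of amplitudes. It is a toy model running on a classical computer, not code for real quantum hardware. The function names and the bit-ordering convention (qubit 0 is the leftmost bit) are assumptions made for this illustration.

```python
import math

# An n-qubit state is a list of 2**n amplitudes.
# Assumption of this sketch: qubit 0 is the most significant (leftmost) bit.

def apply_single_qubit_gate(state, gate, target, n_qubits):
    """Apply a 2x2 gate to the `target` qubit of an n-qubit state."""
    new_state = [0.0] * len(state)
    shift = n_qubits - 1 - target
    for index, amplitude in enumerate(state):
        bit = (index >> shift) & 1
        for new_bit in (0, 1):
            # Same index as before, but with the target bit set to new_bit.
            new_index = (index & ~(1 << shift)) | (new_bit << shift)
            new_state[new_index] += gate[new_bit][bit] * amplitude
    return new_state

def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit in every basis state where the control qubit is 1."""
    new_state = [0.0] * len(state)
    control_shift = n_qubits - 1 - control
    target_shift = n_qubits - 1 - target
    for index, amplitude in enumerate(state):
        if (index >> control_shift) & 1:
            new_state[index ^ (1 << target_shift)] += amplitude
        else:
            new_state[index] += amplitude
    return new_state

def probabilities(state):
    """Chance of seeing each basis state when the qubits are measured."""
    return [abs(amplitude) ** 2 for amplitude in state]

# The Hadamard gate turns a definite 0 into an equal mix of 0 and 1.
s = 1 / math.sqrt(2)
HADAMARD = [[s, s], [s, -s]]

# Superposition: one qubit starting in state 0.
one_qubit = apply_single_qubit_gate([1.0, 0.0], HADAMARD, target=0, n_qubits=1)
print(probabilities(one_qubit))   # roughly half 0, half 1

# Entanglement: Hadamard on qubit 0, then CNOT from qubit 0 to qubit 1.
two_qubits = [1.0, 0.0, 0.0, 0.0]  # both qubits start in state 0
two_qubits = apply_single_qubit_gate(two_qubits, HADAMARD, target=0, n_qubits=2)
bell_state = apply_cnot(two_qubits, control=0, target=1, n_qubits=2)
print(probabilities(bell_state))  # only 00 and 11 have non-zero probability
```

In the second example the two qubits always agree when measured (both 0 or both 1), yet neither outcome is decided in advance. That pattern of correlation is what entanglement means in practice.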

Is Quantum Computing the Future of Technology? Debunking Myths and Facts

Quantum computing is both captivating and mystifying, and it has stirred up plenty of discussion about its potential to revolutionize technology. Many myths circulate about this cutting-edge field, with some people believing that quantum computers will soon outstrip classical computers in every conceivable task. The truth is more nuanced. Quantum computers are expected to excel in specific applications, such as cryptography and complex simulations, but they are not a one-size-fits-all solution. The technology is still largely experimental, and significant hurdles remain before it can be integrated into mainstream computing.

Despite the hype surrounding quantum computing, it's crucial to separate fact from fiction. One persistent myth is that quantum computers will render traditional computers obsolete. In reality, traditional computers will continue to play a vital role, particularly for routine tasks and for applications where quantum methods offer no advantage. Instead of replacing existing technologies, quantum computing is more likely to complement them, opening new avenues for innovation. As we delve deeper into this field, it is essential to maintain a balanced perspective, recognizing both the profound possibilities and the current limitations of quantum technology.

What Are the Real-World Applications of Quantum Computing?

Quantum computing has the potential to change various fields by solving problems that are currently intractable for classical computers. One of the most discussed applications is in cryptography: a sufficiently large, error-corrected quantum computer could break widely used public-key encryption methods such as RSA, which is prompting the development of quantum-resistant algorithms. Quantum computing may also help optimize complex logistical operations, such as those found in supply chain management, although a clear practical advantage over classical methods has not yet been established. If that advantage materializes, better routing could cut costs and improve service delivery.
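
To see why encryption is at risk, it helps to look at the math behind the threat. The best-known quantum attack, Shor's algorithm, factors a number by finding how often the powers of a chosen value repeat (its "order"). The sketch below does that step by brute force on a classical computer, which only works for tiny numbers; the quantum computer's job would be to do this one step quickly for very large ones. The function names are made up for this illustration, and the tiny numbers stand in for the hundreds of digits used in real keys.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1 (assumes gcd(a, n) == 1).

    Brute force here. This is the step Shor's algorithm would hand
    to a quantum computer.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(n, a):
    """Try to split n using the order of a modulo n.

    Returns a pair of factors, or None if this choice of a does not work
    and another value should be tried.
    """
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # the trick needs an even order
    half_power = pow(a, r // 2, n)
    if half_power == n - 1:
        return None  # would only yield the trivial factors 1 and n
    return gcd(half_power - 1, n), gcd(half_power + 1, n)

print(find_order(7, 15))         # 4
print(factor_from_order(15, 7))  # (3, 5)
```

Everything except `find_order` is fast on an ordinary computer. The security of RSA rests on that one step being impractically slow for large numbers, which is exactly the step a quantum computer is expected to speed up.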

Another significant application is in drug discovery and materials science. By simulating molecular interactions at a quantum level, researchers hope to uncover new compounds and understand chemical reactions more deeply than classical simulations allow. Quantum computing is also being explored for machine learning, where it might speed up certain computations, which could prove valuable in fields ranging from finance to healthcare. As quantum technology continues to develop, its real-world applications could have a substantial impact across multiple industries.