Rise by Six: Your Daily Dose of Inspiration

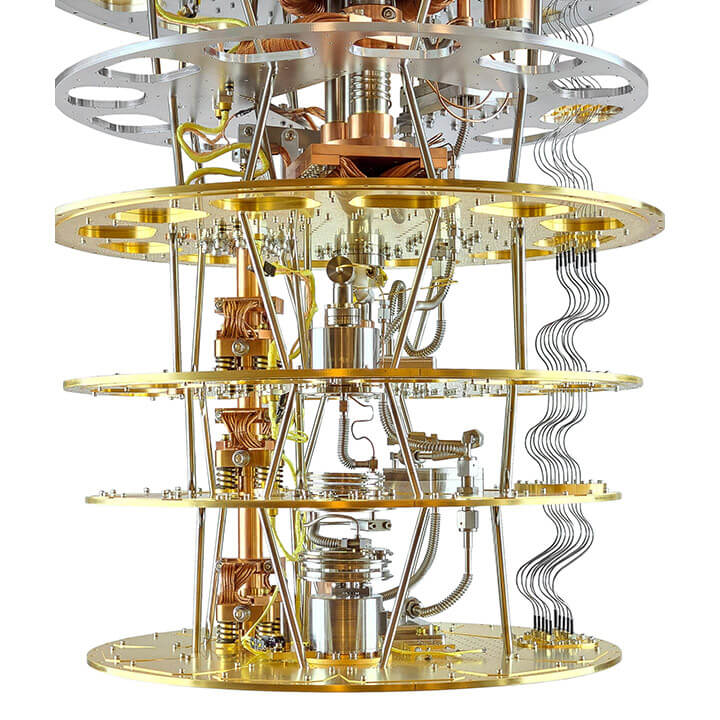

Quantum Computing: The Next Frontier of Digital Wizardry

Unlock the mysteries of quantum computing! Discover how this digital revolution is reshaping technology and redefining reality.

What is Quantum Computing and How Does It Work?

Quantum computing is an approach to processing information that exploits the principles of quantum mechanics. Unlike classical computers, which use bits as the smallest unit of data, quantum computers use qubits. Thanks to a phenomenon known as superposition, a qubit can exist in a weighted combination of 0 and 1 until it is measured, at which point it yields a single definite value. For certain classes of problems, this lets quantum algorithms reach an answer in far fewer steps than the best known classical methods. Qubits can also be linked through a process called entanglement, which correlates their measurement outcomes more strongly than any classical system allows. Entanglement does not transmit information instantaneously, but it is a critical resource behind the computational power of quantum algorithms.
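To make superposition and entanglement a little more concrete, here is a minimal sketch in plain Python that stores qubit states as lists of amplitudes. The helper names (`probabilities`, `marginal_first_qubit`) are illustrative only and do not come from any quantum library; a real simulator would handle arbitrary numbers of qubits.

```python
import math

# State vectors are lists of amplitudes over the basis states, ordered
# |0>, |1> for one qubit and |00>, |01>, |10>, |11> for two qubits.

def probabilities(state):
    """Born rule: the probability of each outcome is |amplitude|^2."""
    return [abs(a) ** 2 for a in state]

def marginal_first_qubit(state):
    """Probability of reading 0 or 1 on the first of two qubits."""
    p = probabilities(state)
    return [p[0] + p[1], p[2] + p[3]]

s = 1 / math.sqrt(2)

# One qubit in an equal superposition of |0> and |1>.
plus = [s, s]

# Two qubits in the Bell state (|00> + |11>) / sqrt(2).
bell = [s, 0.0, 0.0, s]

print(probabilities(plus))         # both outcomes have probability ~0.5
print(probabilities(bell))         # only 00 and 11 have nonzero probability
print(marginal_first_qubit(bell))  # the first qubit alone is ~50/50
```

In the Bell state, each qubit on its own behaves like a fair coin, yet the two results always agree. That correlation is what entanglement provides; because each individual result is random, it cannot be used to send a message.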

The operational foundation of quantum computing lies in quantum gates, which manipulate the states of qubits. These gates play a role similar to classical logic gates, but they are reversible operations that act on every component of a superposition at once. To put it simply, while a traditional computer works through candidate solutions one after another, a quantum computer transforms a superposition of many candidates in a single step. A measurement returns only one outcome, however, so quantum algorithms rely on interference to raise the probability of correct answers and cancel out wrong ones. As researchers continue to uncover the potential applications of quantum computing, fields such as cryptography, drug discovery, and the simulation of complex systems stand to benefit from these advancements.
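A single-qubit gate can be written as a 2x2 matrix that multiplies the qubit's state vector. The sketch below, in plain Python with a hypothetical `apply_gate` helper, applies the Hadamard gate once to create a superposition and then a second time to show interference bringing the qubit back to its starting state.

```python
import math

def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a single-qubit state vector."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

s = 1 / math.sqrt(2)
H = [[s, s], [s, -s]]  # Hadamard gate: creates an equal superposition
X = [[0, 1], [1, 0]]   # Pauli-X gate: the quantum counterpart of NOT

zero = [1.0, 0.0]      # the state |0>

once = apply_gate(H, zero)     # amplitudes ~[0.707, 0.707]
twice = apply_gate(H, once)    # the |1> amplitudes cancel, leaving |0>
flipped = apply_gate(X, zero)  # the state |1>

print(once, twice, flipped)
```

The second Hadamard undoes the first because the two paths leading to |1> carry opposite signs and cancel. Quantum algorithms arrange this kind of cancellation deliberately so that wrong answers become unlikely.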

The Potential Impact of Quantum Computing on Future Technologies

The advent of quantum computing marks a paradigm shift in computational capabilities that could redefine many aspects of technology. For certain problems, quantum algorithms need far fewer steps than any known classical method, which could bring some currently intractable problems within reach. In drug discovery, for instance, quantum algorithms are expected to simulate molecular interactions more accurately than is practical on classical hardware, potentially leading to breakthroughs in pharmaceuticals. Industries like finance and logistics could also benefit from quantum approaches to optimization, improving decision-making and resource management, although practical speedups in these areas remain an open research question.

Furthermore, the implications of quantum computing extend beyond mere speed; they also challenge existing cybersecurity protocols. Once large, error-corrected quantum computers become available, they could break widely used public-key encryption such as RSA and elliptic-curve cryptography. Symmetric ciphers are less affected and can generally be protected with longer keys. This prospect is prompting a shift towards post-quantum cryptography, which runs on classical hardware, and quantum key distribution, which leverages the principles of quantum mechanics to secure key exchange. Additionally, advances in quantum networking could change how systems connect and communicate, since eavesdropping on a quantum channel can in principle be detected, which some envision as groundwork for a web more resistant to tampering and surveillance. The future is indeed bright for technologies influenced by quantum computing, promising both remarkable advancements and profound challenges.
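As a rough illustration of how symmetric encryption is affected, a common rule of thumb (an assumption added here, not a claim from this article) is that Grover's algorithm cuts a brute-force key search from about 2^k attempts to about 2^(k/2), so a k-bit key offers roughly k/2 bits of security against an idealized quantum attacker. The function name below is hypothetical.

```python
def grover_effective_bits(key_bits: int) -> int:
    """Approximate security level of a symmetric key under Grover search.

    Rule of thumb only: brute force drops from ~2^k to ~2^(k/2) steps,
    ignoring the large practical overheads of running Grover at scale.
    """
    return key_bits // 2

for k in (128, 256):
    print(f"{k}-bit key -> ~{grover_effective_bits(k)}-bit quantum security")
# 128-bit key -> ~64-bit quantum security
# 256-bit key -> ~128-bit quantum security
```

This is why doubling the key length is the usual suggestion for symmetric ciphers. Public-key schemes such as RSA are a different case: Shor's algorithm attacks their underlying mathematics directly, so longer keys do not help in the same way.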

Quantum vs Classical Computing: Understanding the Key Differences

Quantum computing and classical computing represent two fundamentally different approaches to processing information. Classical computing relies on bits as the basic unit of data, where each bit can be in one of two states: 0 or 1. This binary system allows classical computers to perform a wide array of calculations and tasks, but they can struggle with problems whose cost grows exponentially with input size. Quantum computing, on the other hand, uses quantum bits, or qubits, which can exist in a superposition of 0 and 1 and can be entangled with one another. This enables quantum computers to solve certain problems far faster than classical computers. The speedup is exponential over the best known classical methods for tasks such as factoring large numbers, which underpins much of modern cryptography, and quadratic for unstructured search. For optimization, the size of any quantum advantage is still an open question.
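One way to see the gap between bits and qubits is to count what a classical machine would need to store in order to describe a general n-qubit state: 2^n complex amplitudes. The sketch below assumes 16 bytes per amplitude (two 64-bit floats), which is a typical choice but an assumption here, as is the function name.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold all 2^n amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude

for n in (10, 30, 50):
    print(n, statevector_bytes(n))
# 10 16384               (16 KiB)
# 30 17179869184         (16 GiB)
# 50 18014398509481984   (16 PiB)
```

This exponential growth is why classical simulation of quantum systems becomes impractical so quickly. It does not mean a quantum computer lets you read out 2^n values: measuring n qubits still yields only n classical bits.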

The key differences between quantum and classical computing extend beyond their fundamental units of data. Here are a few critical distinctions:

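- Performance: Quantum computers can solve certain problems, such as factoring large integers and simulating quantum systems, far faster than their classical counterparts. They are not faster at everything, and classical hardware remains better suited to most workloads.

- Algorithms: Quantum algorithms exploit quantum mechanics to outpace classical methods. Shor's algorithm factors large integers in polynomial time, a task believed to be infeasible for classical computers at cryptographic key sizes, while Grover's algorithm offers a quadratic speedup for unstructured search.

- Applications: While classical computers dominate everyday tasks and applications, quantum computers are expected to have their greatest impact in fields such as drug discovery, materials science, and secure communication.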
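To give a feel for how a quantum algorithm such as Grover's works, here is a small classical simulation in plain Python. It tracks the amplitude of every item in an unstructured list and repeatedly applies the two Grover steps, an oracle and a diffusion operation. The function name and the choice of 64 items are illustrative assumptions; simulating this classically takes more work than a plain search, so the point is only to show the iteration count.

```python
import math

def grover_search(n_items: int, marked: int):
    """Simulate Grover's algorithm using a list of real amplitudes."""
    # Start in the uniform superposition over all items.
    amps = [1 / math.sqrt(n_items)] * n_items
    # About (pi / 4) * sqrt(N) iterations maximize the success probability.
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    # Probability that a measurement returns the marked item.
    return iterations, amps[marked] ** 2

iterations, p_success = grover_search(n_items=64, marked=42)
print(iterations, p_success)  # 6 iterations, success probability above 0.99
```

A classical search over 64 unsorted items needs about 32 lookups on average, while Grover's algorithm needs 6 oracle calls here. The advantage is quadratic, growing with the square root of the list size, rather than exponential.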

Understanding these differences is essential as we venture further into the realms of advanced computational technology.