Rise by Six: Your Daily Dose of Inspiration

Explore insights and stories that elevate your day.

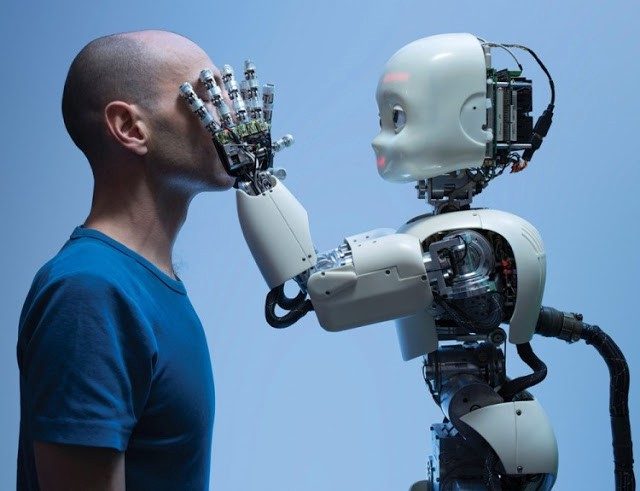

When Robots Dream: A Peek into the Future of AI and Robotics

A look at where AI and robotics are heading: how machines might 'dream', whether they could ever feel, and which trends are worth watching.

The Science Behind AI: How Robots are Learning to Dream

The idea of AI 'dreaming' sits at the intersection of neuroscience and advanced computing. Researchers are exploring how robots, much like humans, might learn patterns and generate new ideas through a process akin to dreaming. The algorithms involved are artificial neural networks, which are loosely inspired by the networks of neurons in the human brain. By simulating different scenarios during downtime, a robot can evaluate situations it has not yet met in the real world, which sharpens its problem-solving and decision-making. As these systems mature, this ability to 'dream' may help them find novel solutions and operate more efficiently.
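One way to picture learning from simulated scenarios during downtime is a toy sketch in which a robot first records what happens when it acts, then replays those memories offline to work out a plan. The corridor world, the function names, and the use of Q-learning updates here are all assumptions chosen for illustration, not a description of any particular research system.

```python
GOAL = 4            # rightmost cell of a 5-cell corridor (hypothetical toy world)
ACTIONS = (-1, +1)  # step left or step right


def step(state, action):
    """Toy environment: move along the corridor; reward 1.0 on reaching GOAL."""
    nxt = min(max(state + action, 0), GOAL)
    return nxt, (1.0 if nxt == GOAL else 0.0)


def explore_while_awake():
    """Try every action once in every non-goal cell and remember the outcome."""
    memory = {}
    for state in range(GOAL):
        for action in ACTIONS:
            memory[(state, action)] = step(state, action)
    return memory


def dream(memory, sweeps=200, alpha=0.5, gamma=0.9):
    """Replay remembered transitions offline, applying Q-learning updates."""
    q = {key: 0.0 for key in memory}
    for _ in range(sweeps):
        for (state, action), (nxt, reward) in memory.items():
            # Value of the best follow-up action; nothing follows the goal.
            future = 0.0 if nxt == GOAL else max(q[(nxt, a)] for a in ACTIONS)
            target = reward + gamma * future
            q[(state, action)] += alpha * (target - q[(state, action)])
    return q


def greedy_policy(q):
    """Pick the highest-valued action in each non-goal cell."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}


memory = explore_while_awake()
q_values = dream(memory)
policy = greedy_policy(q_values)
print(policy)
```

No new real-world steps are taken inside `dream`; the robot only revisits what it already recorded. In this toy world the resulting policy steps right, toward the goal, from every cell.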

Much current work in artificial intelligence focuses on generative models, which let machines produce content that echoes human creativity. Generative Adversarial Networks (GANs), for instance, pit two neural networks against each other: a generator that produces candidate outputs and a discriminator that tries to tell them apart from real examples. As each network improves, the generator's outputs become more refined. Generative models also learn the patterns and trends in large datasets and recombine them into new outputs, which can be thought of as a form of dreaming that fuels innovation. Understanding how robots learn to 'dream' could reshape both the boundaries of the technology and our perception of machine intelligence.
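The adversarial idea can be sketched in a few lines of plain Python. This is a deliberately tiny stand-in, not a real GAN: each 'network' is reduced to a single linear unit, the data is one-dimensional, and the names, learning rate, and target distribution are all assumptions made for illustration. Training of this kind can oscillate rather than settle, so the sketch makes no claim about how closely the generator ends up matching the real data.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps=500, lr=0.01, real_mean=4.0, real_std=0.5, seed=0):
    """Alternate discriminator and generator updates on 1-D data."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: P(real) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(real_mean, real_std)
        noise = rng.gauss(0.0, 1.0)
        fake = a * noise + b

        # Discriminator step: gradient descent on
        # -[log D(real) + log(1 - D(fake))].
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: gradient descent on -log D(fake),
        # i.e. nudge the fake sample toward what D rates as real.
        d_fake = sigmoid(w * fake + c)
        a += lr * (1.0 - d_fake) * w * noise
        b += lr * (1.0 - d_fake) * w
    return {"a": a, "b": b, "w": w, "c": c}


params = train_toy_gan()
print(params)
```

The two update blocks are the 'pitting against each other': the discriminator is rewarded for separating real from fake, and the generator is rewarded for fooling the discriminator it has just faced.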

Will Robots Ever Have Emotions? Exploring the Future of AI

Whether robots will ever possess emotions is intensely debated among scientists, ethicists, and technologists. As artificial intelligence advances rapidly, many wonder whether machines could truly understand and replicate human feelings. Today, AI can simulate emotional responses by analyzing data and recognizing patterns, but these are programmed or learned reactions rather than genuine feelings. Human emotions are grounded in biological and psychological experience, which raises the question: can a machine ever authentically 'feel', or will it always remain an imitation?

Looking ahead, advances in neuroergonomics and robotics may bring us closer to machines that simulate emotional connection more convincingly. Critics argue, however, that without consciousness or self-awareness, robots will remain incapable of true emotional experience. As AI becomes part of daily life, creating machines that mimic emotions also raises ethical questions. Will society accept emotionally intelligent robots as companions, as caregivers, or as co-workers? These questions highlight the complex interplay between technology and humanity in our evolving relationship with AI.

How Robotics is Shaping Our Future: Trends to Watch in AI Development

Robotics is evolving rapidly and is already reshaping many industries and aspects of daily life. AI development is at the forefront of this change, driving innovations that were once the stuff of science fiction. One key trend to watch is the integration of machine learning algorithms that let robots learn from their environment and improve their performance over time. In manufacturing, for example, robots are becoming more versatile and autonomous, which reduces the need for human intervention and raises productivity.
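As a rough illustration of learning from the environment and improving over time, the sketch below has a robot choose among a few ways of doing a task, observe whether each attempt succeeds, and gradually favor what works. It uses an epsilon-greedy strategy, one simple approach among many; the success rates, function name, and parameters are hypothetical.

```python
import random


def run_trials(success_rates, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy learning over a set of options with unknown success rates."""
    rng = random.Random(seed)
    counts = [0] * len(success_rates)       # how often each option was tried
    estimates = [0.0] * len(success_rates)  # running average of observed success
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try an option at random.
            choice = rng.randrange(len(success_rates))
        else:
            # Exploit: otherwise use the option that looks best so far.
            choice = max(range(len(success_rates)), key=lambda i: estimates[i])
        # Feedback from the (simulated) environment: success or failure.
        reward = 1.0 if rng.random() < success_rates[choice] else 0.0
        counts[choice] += 1
        # Incremental mean: shift the estimate toward the new observation.
        estimates[choice] += (reward - estimates[choice]) / counts[choice]
    return counts, estimates


# Hypothetical example: three grip strategies with different true success rates.
counts, estimates = run_trials([0.2, 0.5, 0.8])
print(counts, estimates)
```

The robot is never told the true success rates; it only sees the outcome of each attempt, and its estimates are built entirely from that feedback.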

Another noteworthy trend is the rise of collaborative robots, or cobots, designed to work alongside humans. Equipped with advanced sensors and AI capabilities, they can perform complex tasks safely and efficiently in shared workspaces. As industries look to improve workplace safety and efficiency, demand for cobots is expected to grow. Ethical considerations around robotics and AI development are also gaining attention, underscoring the need for responsible innovation. As this new era of technology arrives, understanding the implications of robotics for society will be essential.